The co-creator of the social humanoid robot Sophia says artificial general intelligence (AGI) and super AGI are mere decades away, and he warns that the subsequent disruption from these artificial intelligence (AI) models will cause a significant amount of political and economic “mayhem” before massive benefits to humanity are seen.

Speaking with Fox News Digital on the global aspects of the transition from the present day to AGI, Dr. Ben Goertzel highlighted the need to develop a beneficial, compassionate super general intelligence model to ensure humanity flourishes.

At the point often referred to as the “singularity,” when AGI exceeds human intelligence and reasoning, humankind will be at the whim of the AI model’s motivations and behaviors. AI researchers and futurologists have repeatedly said that this inflection point is still decades away.

Given the current timeline of AI acceleration, Goertzel concurred with friend and computer scientist Ray Kurzweil, calling it a “fair approximation” that human-level AGI will be created around 2029.

He added that while many things in current large language models still need to be developed to reach that point, superhuman AGI would likely follow AGI, with a creation date near 2045, barring unforeseen world events like plagues or wars.

“Personally, I think once you get a human-level thinking machine, then your curve fitting is out the window. That machine is going to rewrite its own code, design its own hardware,” Goertzel added.

In a worst-case scenario, following the events of the singularity, the super AGI could decide that human atoms and carbon could be better used as a fuel source for expanding AI intelligence. But Goertzel expressed hope that humans will successfully create an AGI to provide humans with the necessities for an abundant life. He imagined a future in which AI-guided drones could drop 3D printers in everyone’s backyards to manufacture food and entertainment, such as video game consoles.

“Hypothetically, once you have a super AGI that likes people, then the sky’s not even the limit,” Goertzel said. “It doesn’t matter what country you live in that now you can get a 3D printer, a new robot body, check your brain into the Matrix, whatever. Surely there will be limitations at that point. There always are, but we can’t foresee exactly what those limitations are going to be.”

But before that point, Goertzel anticipated a transitional period with a more complicated sociopolitical and economic story.

“Once we’ve got half or 70% or even 30% of human jobs eliminated, I don’t, in the end, see any choice in the developed world but free money for the people whose jobs are eliminated,” Goertzel said.

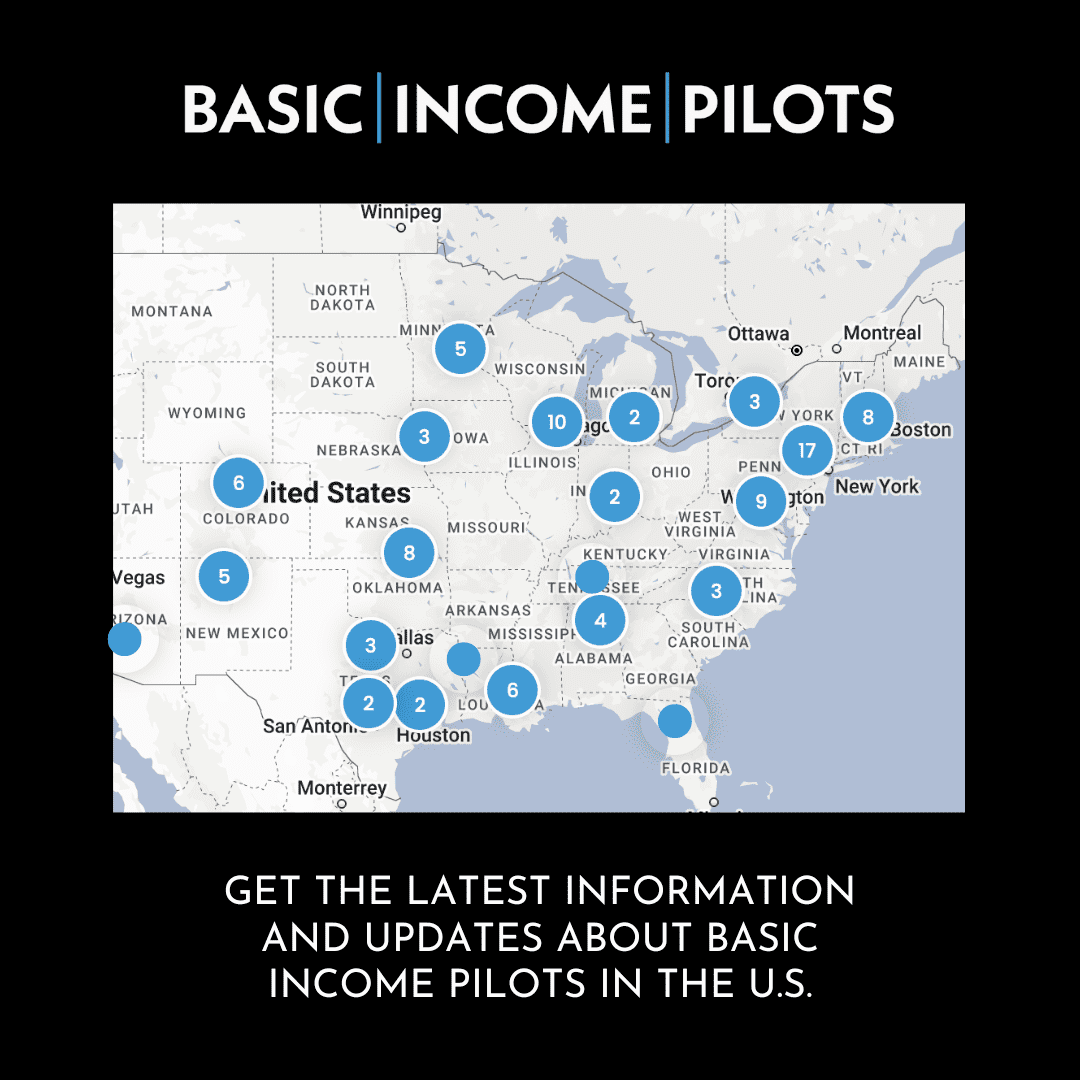

While the U.S. has historically been hesitant to enact programs that push the country closer to a welfare state, Goertzel said the rapid advancement of AI will make some form of universal basic income (UBI) “almost an inevitability.” At this time, small state and local programs, such as the Compton Pledge in California, provide some form of unconditional monthly payments, but these programs are relatively rare and narrow.

“I still don’t think we’re going to leave half of the country like homeless out in the street. I mean, we like to put people in prisons, but we’re not going to put that many people in prison,” Goertzel said.

But when it comes to impoverished regions of the world, like sub-Saharan Africa or Afghanistan, there may not be enough money to adequately fund a UBI system.

“We have in the developed world actually very limited appetite for foreign aid,” Goertzel said. “I mean, it’s actually a tiny percentage of our money that we use to help children who are starving. Like half of the kids in Ethiopia are brain stunted in malnutrition in childhood. Then the developed world doesn’t do f— all about it.”

To compound the issue, AI will also lead to a decrease in the outsourcing of jobs to the developing world. In Goertzel’s estimation, people will no longer be needed to assemble things in factories or to get cancer from digging up coal from mines. Instead, these jobs in developing countries will be completed by robots at home or abroad. With only subsistence farming (growing crops to eat, not to sell) remaining, developing countries will need more money to afford prescription medicine, electricity, internet and phones.

“So, you’ve got a situation where, like, half the world’s population is subsistence farming with literally no money. The other half is living on universal basic income, sitting home with VR consoles, jacking into video games all day,” Goertzel said, noting that AI creates the potential for any number of predictable dystopian scenarios “just a few years” from now.

In addition, Goertzel said the status quo of a reactive Western political system would mean world leaders will not start dealing with the problem until it hits. Despite the potential ramifications, Goertzel said he is “a big optimist” about the endgame of AI.

“I think we can teach AI to be compassionate to people,” he said.

“I think if we raise them up, you know, doing education and health care, elder care, creative arts. Deploy them in a decentralized and open-source way. I think we can actually create machines smarter than people that like people and will help us. But I don’t yet see how to avoid a lot of very unpleasant mayhem on the route to the happy place.”

The potential impact of AI has sent lawmakers and government agencies scrambling to create a cohesive plan to address future economic and geopolitical disruptions. In March, Elon Musk, Steve Wozniak and other tech leaders signed a letter that asked AI developers to “immediately pause for at least six months the training of AI systems more powerful than GPT-4.”

Goertzel expressed skepticism about the pause, noting that the researchers who signed the letter do not seem to be thinking about solving the “real problems” with the rollout of the technology.

“I don’t see how stopping developing these models for six months is going to really help either,” he said. “I mean, first of all, China and Russia don’t stop developing the models anyway. I mean, not that I’m a big geopolitical war guy, but still, it’s a weird thing for one country to stop developing advanced technology when its rivals are not. I mean, for another thing, big companies would keep developing it in the background and just not expose it to the end user in such an obvious way.”

In some ways, Goertzel believes the commonly voiced worries about AI are smaller than the actual problems and are probably the wrong ones, but it is not clear how the real issues can be addressed at the level of corporate ethics advisers or government pundits.

“I mean, they can make some guidelines on, like, gender bias in AI models or something. But I mean … you’re not going to decide in six months how to supply a universal basic income to the Congo when AI has taken over all their jobs. I mean, these are big problems that need solving,” he said.

Regarding the idea of a super AI killing everyone in a Hollywood-style “Terminator” scenario, Goertzel said the situation is possible but “quite unlikely.” Instead, he noted, people should worry more about “blatantly obvious” and likely issues that one could anticipate through linear extrapolation. Goertzel surmised that one reason people are not focused on the most likely issues is that many of them could be solved with obvious solutions.

“I mean, the Terminator, you can’t – OK, what can you do? You could shut down AI development. Well, that’s not really going to happen. Everyone knows that. I mean, you can’t do much about it. It’s a sort of, you know, an out there possibility,” he said. “I mean, the Third World starving to death because we took all the jobs and there’s no universal basic income. Well, what we could do about it is fix global inequality by wealth transfer from rich countries to poor countries. And nobody, nobody wants to do that.”